A couple of mathematics items:

- Photographer Jessica Wynne has been taking photographs of mathematicians’ blackboards, and there’s a story about this in the New York Times. Many of her photographs have been taken here at Columbia, where we happen to have, besides some excellent mathematicians, also some excellent blackboards.

- A non-Columbia excellent mathematician I’ve sometimes written about here is Bonn’s Peter Scholze. If you want to get some idea of the field he works in (arithmetic geometry) and what he has been able to accomplish, a good place to learn is Torsten Wedhorn’s new survey article On the work of Peter Scholze.

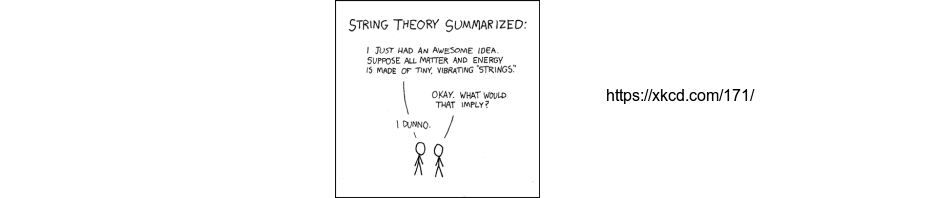

On the string theory front:

- Arguments about the failure of string theory as a unified theory have been going on so long that they are now a topic in the history of science. For detailed coverage of many events in the long history of these arguments, you can consult historian of science Sophie Ritson’s 2016 University of Sydney doctoral dissertation. It and some of her other work are available at her academia.edu website.

- For the latest in content-free argumentation about the failure of string theory unification, Steve Mirsky has a podcast discussion with string theory fan Graham Farmelo (see discussion of his recent book here), in which Mirsky challenges Farmelo about the problems of string theory. Farmelo has spent a lot of time at the IAS and basically takes the attitude that the point of view of certain unnamed string theorists there is what should be followed. I’d describe it as basically “we’ve given up working on string theory unification, but will keep insisting it is the best way forward until someone proves us wrong by coming up with a completely successful alternate idea.”

- For the absolute latest attempt to extract some sort of “prediction” from string theory, see this week’s Navigating the Swampland conference in Madrid. Today there was a discussion session, with results shown of a survey of the views of those attending the conference. Note that, on the contentious topic of the reliability of supposed metastable de Sitter solutions of string theory, the Stanford group defending this reliability does not seem to be represented at the conference. I’ve been trying to understand what picture of physics this research has in mind, given that one main goal is to torpedo the metastable de Sitter solutions, and thus the usual “anthropic string landscape” picture. Looking at page seven, most participants seem to want to replace single field inflation models with more complicated quintessence or multi-field inflation models.

In Hirosi Ooguri’s talk he gives a supposed “unparalleled opportunity for string theory to be falsified”, I gather by claiming string theory somehow implies a small value of r. He quotes Arkani-Hamed as saying that string theorists should have reacted to the bogus BICEP2 measurement of r=.2 by saying “if this is true string theory is falsified.” They didn’t do that. When the topic came up at the time, what they had to say was:

Theoretical physicist Eva Silverstein of Stanford says she disagrees that string theory-based models of inflation are in any sort of trouble. “There is no sense in which we are forced to start over,” she says. She adds that in fact a separate class of theories that involve both axions and strings now look promising.

Linde agrees. “There is no need to discard string theory, it is just a normal process of learning which versions of the theory are better,” he says.

Update: Some more math

- Not just physicists, but mathematicians too can get the ridiculous headline treatment; see Number Theorist Fears All Published Math Is Wrong.

- A recent proof of the twin primes conjecture for [polynomials over] finite fields from my Columbia colleague Will Sawin and a collaborator is the subject of a new Quanta article from Kevin Hartnett, see Big Question About Primes Proved in Small Number Systems.

Several physicists now have pieces up explaining why Sean Carroll’s claim that “the Multiverse did it” (i.e. all you have to do is believe in multiple worlds) isn’t a real solution to the measurement problem. Besides the previously mentioned Chad Orzel, there’s also Sabine Hossenfelder and Philip Ball. I agree with Ball’s conclusion:

Here, then, is the key point: you are not obliged to accept the “other worlds” of the MWI, but I believe you are obliged to reject its claims to economy of postulates. Anything can look simple and elegant if you sweep all the complications under the rug.

Update: A video of the discussion session at the Swampland conference is here. It seems that I’m not the only one confused about what assumptions people working on this are making and what they are or are not accomplishing.

I think Ball makes a particularly good point near the end of his post:

That NYT story really makes me wish we hadn’t gone the whiteboard route in our science building.

Blake Stacey (and others),

I’m afraid I need to encourage everyone who wants to discuss many worlds and the Sean Carroll campaign to do so at the other blogs covering this. Glad to see that others are writing about this, and they should be encouraged.

Regarding math blackboards, the ones of Knill teaching at Harvard have been simply inspiring:

http://www.math.harvard.edu/~knill/teaching/summer2017/exhibits/blackboards/index.html

I don’t see why people are saying it’s “twin primes over finite fields”, when really it’s twin primes over polynomial rings over finite fields. No one would say we proved the twin prime conjecture over the integers (the OG conjecture) if it was proved over Z[x]. But maybe that’s just me being curmudgeonly.

Peter, a link to a PI colloquium on swampland by Cumrun Vafa

http://pirsa.org/19090116/

David Roberts,

I think in the Quanta article it’s just an artifact of the fact that they can only explain one concept at a time – so they explain twin primes, and then finite fields, and then say “we’re going to combine them” and then say “that doesn’t make sense, finite fields have no primes” so introduce polynomials.

But you’re right that this isn’t technically correct language – I would always say “over F_q[T]” to be as precise as possible, or “over function fields” to be a little more colloquial.

It’s fun to try to justify it – one could argue that, because the twin primes conjecture is only interesting in Dedekind domains, the phrase “twin primes conjecture” carries an implicit “for Dedekind domains” or even better “for principal ideal domains” so “over finite fields” means “for principal ideal domains over finite fields, i.e. polynomial rings”.

I guess Peter got it from the Quanta article but I’m sure he can argue his own case if he so desires.

David Roberts/Will Sawin,

Yes, I was using a sloppy terminology, following Quanta, but I think it’s justifiable. Anyone who knows something about this field will know what is being meant. The standard math terminology for these things (“function fields”) has always seemed to me to be opaque to anyone who hasn’t heard it before, giving no indication of what kind of “functions” this has to do with.

A couple of years ago, Buzzard saw talks by the senior mathematicians Thomas Hales and Vladimir Voevodsky that introduced him to proof verification software that was becoming quite good. With this software, proofs can be systematically verified by computer, taking it out of the hands of the elders and democratizing the status of truth.

An apparent red-guard mentality concerning the need of canceling stale “elders” and implementing forced “democratization” (presumably after a “necessary conversation” has been had; with said conversation hopefully not ending in a short walk behind the shed)?

It’s almost as if I was reading Vice.com.

Oh wait! Never mind.

(And I absolutely like theorem-proving by machine; but I also agree with Michael Harris in Why the Proof of Fermat’s Last Theorem Doesn’t Need to Be Enhanced)

Yatima,

What I think is more remarkable is the identification of “democratizing” with replacement of the judgement of human beings by that of machines.

I think this puts the matter well.

Years ago, I read a comment by Michael Ashbacher about the classification of the finite simple groups. He wrote that the classification theorem was so long, the probability of an error in it approached 1, but the probability of any error being uncorrectable was nearly zero. There’s just so much found out in the course of proving the result that the final structure is basically robust, in a way that mechanistic checkability doesn’t really capture. The greater concern with the CFSG is that it wasn’t written in any single place, let alone “democratized” so that people even slightly less specialized in the field could approach it. (The “second-generation” proof, revised and systematized with the benefit of hindsight, runs to eight volumes so far and still isn’t complete.)

(*Aschbacher)

I agree with David Roberts: “twin primes conjecture for finite fields” made no sense to me, whereas “twin primes conjecture for polynomials over finite fields” should make sense to everyone.

OK, OK, I give in. “finite fields” edited to “polynomials over finite fields”.

I am more concerned with the exposition of the classification of finite simple groups than its correctness. Will future generations be able to make sense of this voluminous information? For example, some of Michael’s work requires seriously heavy sledding to understand — he erroneously assumes the reader is at least half as smart and knowledgeable as he is. (Back in the day, I heard loud complaints about the difficulty of understanding my own very small contributions to the subject, although I don’t think exposition was the real issue.) We should leave future generations with a reasonable chance of understanding the classification and making their own improvements and judgements about correctness when key contributors are unavailable to answer questions. There has been a lot of work on improving the situation, but I wonder whether it is sufficient. There is much understood by experts that is not spelled out on the printed page — Serre has made his share of complaints about the difficulties.

I do not believe this situation is unique to finite group theory. Too much knowledge is in the form of folklore, talks to an inner circle, or private conversations and correspondence. Fortunately, the internet has improved the distribution of information, e.g., the arXiv, videos of lectures, unpublished books, papers, and course notes posted on websites, and online availability of some letters, such as those from Langlands to Weil and Serre.

I agree with Thurston that human understanding should be our goal:

https://arxiv.org/pdf/math/9404236.pdf

Creating a proof in software of the classification would be a ridiculously large undertaking. I doubt the result would be easier for humans to digest.

I was not too impressed by Farmelo’s recent book although I loved his book on Dirac. Basically his attitude seems to be, “I am not an expert on this topic, so I am just going to assume the experts know best.”

Wavefunction,

Yes, Farmelo’s attitude appears to be that “if the most illustrious theorist at the IAS tells me that his approach to going beyond GR to a unified theory is the best one, it must be the best way forward”. An obvious counterargument is that if he had been a visitor at the IAS during an earlier period, Einstein would have told him that the ideas about unification he was working on then were the best way forward. Einstein’s successors at the IAS today I believe are just as wrong now as he was then.

As a pure mathematician who has used computer-assisted proofs for almost 40 years, perhaps I can comment on the article “Number theorist fears all published math is wrong”. Of course all published math is wrong, just as all published physics is wrong. But this is to take an absurd philosophical position. And as many commenters above have noted, pedantic approaches to proof are as foreign to real mathematics as they are to physics. There are mathematicians who loudly proclaim that their proofs are “computer-free” as if it was a virtue – and either make lots of mistakes that good use of a computer would have corrected, or silently use computers for any calculations they regard as “routine”, while disparaging anyone else’s use of computers that they don’t understand. There are proofs that are regarded as “computer proofs” (such as the four-colour theorem) that are conceptually easy to understand, where the use of the computer consists of (a) finding a good way of dividing the problem into hundreds (originally thousands) of separate cases, and (b) systematically working through the cases with a handful of routine methods. There are proofs that are regarded as “human proofs” (such as the classification of finite simple groups) that are conceptually very difficult to understand, where the use of a computer has very little to contribute. Formalising such a proof in order to “check” it is an interesting exercise in logic/computer science, but who checks the checker? Proofs are checked by being part of the culture of mathematics, being used and re-used, re-worked, re-interpreted, taught to the next generation and continually checked against experiment. Just as in physics. And computers have always been used in mathematics – they just used to be human beings, that’s all.

Dear Peter,

Regarding Farmelo’s supposed attitude that “if the most illustrious theorist at the IAS tells me that his approach to going beyond GR to a unified theory is the best one, it must be the best way forward,” and your “obvious counterargument is that if he had been a visitor at the IAS during an earlier period, Einstein would have told him that the ideas about unification he was working on then were the best way forward,”

There’s a significant difference. In Einstein’s late days, most of his colleagues who worked on unified field theories, using extensions of Riemannian geometry to include electromagnetism, like Eddington or even Schrodinger, had already moved on. Today, it’s not only a lonely, however illustrious, theorist at the IAS who believes that the best approach forward is a particular framework for unification, but a significant percentage of a whole generation of particle energy theorists, working at many illustrious places, particularly in the US.

Another matter. Looking at this Swampland program (the colloquium at PI linked above is a good summary), it seems that Vafa and his collaborators are arguing that the whole approach to EFTs and naturalness arguments used to argue for SUSY at the LHC failed, since their theories, even if they looked OK from a QFT point-of-view, are not in the string theory landscape at all.

Jackiw Teitelboim,

Yes, the situation is different. In the late stages of Einstein’s career there were plenty of new experimental results pointing to more promising things to think about than generalizations of the Levi-Civita connection. Most people sensibly followed what experiment was telling them and ignored Einstein. If we were in the current situation back in the late 1940s, it’s hard to know how many people would have followed Einstein. Quite possibly a “significant percentage” of theorists would have been arguing that generalized connections were the best way forward.

The “significant percentage” argument right now is useless: you can find an equally significant percentage who don’t think string theory unification is the way forward. As always, judging an issue like this by numbers of followers isn’t a very good idea. I’ve said before that I think the best argument for “string theory unification is the best way forward” is that Witten thinks so. Given zero experimental guidance, following the best theorist you can find may be the best thing to do, but it’s a good idea to keep in mind that this doesn’t always work, with Einstein a good example. In his early 30s Einstein was seduced (for good reason) by a certain vision of the geometrization of physics, one he should not have stayed so attached to for so long. At a very similar age Witten was seduced by a vision of string theory unification, and I think the psychology of why he won’t give that up may not be that different than Einstein’s story.

About the Swampland program, I do recommend watching the recent discussion session mentioned earlier:

http://150.244.223.31/videos/video/2098/

The degree to which people are flailing about and unsure of what arguments they can trust and build on is remarkable.