First some math news:

- An anonymous commenter claims here that the 2026 ICM will take place in Philadelphia. I had heard that a US group was submitting a proposal, so this rumor is plausible.

- Many mathematicians and physicists have signed an Open Letter on K-12 Mathematics pointing to problems with attempts to reform mathematics education such as the California Mathematics Framework. For more about this, see the blog entry posted here and on Scott Aaronson’s blog, and more detail here.

While I’ve always had some sympathy for the general idea that much about the US K-12 math curriculum could be changed and improved, there’s a huge problem with all proposed changes based on the “algebra/pre-calculus/calculus sequence is too hard and not relevant to everyday life” argument. Students who leave high school without algebra and some pre-calculus are unequipped to study calculus, and calculus is fundamental to learning physics. Without being able to learn physics, they will find a huge range of possible fields of study and careers closed to them, from much of engineering to medical school. Whatever change one makes to K-12 math education, it shouldn’t leave students entering college with a severely limited choice of fields they are prepared to study.

- Davide Castelvecchi at Nature has a story about machine learning being useful in knot theory and representation theory. Given my personal prejudice that hearing endlessly about how AI and machine learning will take over everything is just depressing, I’m trying to ignore this kind of thing. But, together with stories like the success of proof assistants in solving a problem posed by Scholze, it’s harder and harder to believe what I would like to believe (that this is all a bunch of hype that should be ignored).

For some physics items:

- Jim Baggott has an excellent article at Aeon about the “Shut up and calculate” meme, featuring a retraction by its originator, David Mermin:

> In a quick follow-up discussion with me in July 2021, Mermin confessed that he now regrets his choice of words. Already by 2004 he had ‘come to hold a milder and more nuanced opinion of the Copenhagen view’. He had accepted that ‘Shut up and calculate’ was ‘not very clever. It’s snide and mindlessly dismissive.’ But he also felt that he had nothing to be ashamed of ‘other than having characterized the Copenhagen interpretation in such foolish terms’.

- For some wisdom on the thorny issue of how to relate Euclidean and Minkowski signature metrics in gravity, see the recent IAS lecture by Graeme Segal on Wick Rotation and the Positivity of Energy in Quantum Field Theory.

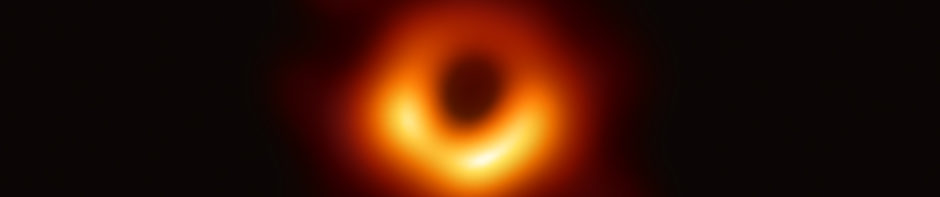

- In fundamental theoretical physics these days, it’s quantum information theory all the time, with conferences around now here, here, here, and here. I can’t figure out what the relevance of any of this is supposed to be to actual models describing reality. Best guess would be that this is supposed to “solve the black hole information loss paradox”, although in that case Sabine Hossenfelder has some apt comments here.

- For something more inspirational, see Natalie Wolchover’s long piece at Quanta on the JWST.

Update: For more from Geordie Williamson about the math/AI story, see here. For more about the problems with the California Mathematics Framework and its co-author, see here.

Not sure if you will want to change it, but your first sentence begins “First some math news: …”. Then later you say “For some math items: …”. But they seem to be about physics. My guess is that in the second one you meant to write “physics” rather than “math”.

Jim Akerlund

Machine Learning:

Is this the problem posed by Scholze that you mentioned, Peter?

https://xenaproject.wordpress.com/2020/12/05/liquid-tensor-experiment/

So it seems to me that the problem was to formalize/verify Scholze’s proof of a theorem in condensed math, not to prove a conjecture. I don’t think the computer contributed any original or creative insight here. In principle, the formalization could have been done with pen and (a lot of) paper, so the computer here served as more of a digital bookkeeping mechanism than an artificial intelligence! But I am woefully unknowledgeable about this whole project, so if the computer supplied some crucial step in Scholze’s proof, I would be glad to hear it!

Like you, I find the prospect of machine learning surpassing human beings in our mathematical pursuits quite depressing. I am confident, however, that we humans will continue to supply creative insights and perspectives on hard problems that machines are incapable of providing. Could computers have originated schemes and étale cohomology? I find that very hard to imagine.

Education:

I don’t support the California reforms whatsoever. In particular, I am appalled by the notion of doing away entirely with programs for gifted students, not least because of my memories of being a child prodigy forced to endure fourth-grade mathematics. At the same time, I question whether calculus should be the goal of a high-school math education. As a mathematician, I find it a rather arbitrary goal. If I were to reform math education in America (indeed, to rebuild it from scratch), I would spend a lot more time inculcating a sense of logic and the skill to read and write proofs in abstract mathematics. I see no reason why you can’t teach set theory, ZFC, infinite cardinals/ordinals, topology, groups/rings/fields, or even differentiable manifolds and some of the basic elements of Lie groups and representation theory to high-school students, if the material were taught appropriately. It would be a much better use of time than explaining how to compute the integrals of different rational functions by hand, and it’s certainly more interesting, beautiful and foundational material.

Regarding the quantum information theory point, did you see that at Strings 2021 Witten said that quantum information theory could lead the way to an explanation of what string theory “really is”? And then he got into an argument with Cumrun about it? For me, that was the final demonstration of what an all-around waste of time string theory has been; now they’re trying to shoehorn every popular fad and meme into it to maintain relevance. It’s sad, really.

P.S. Not sure if you already mentioned this earlier; I’m only a casual reader, so sorry if this is a redundant point.

On the education item: I don’t support the California reforms and lent my name to the petition. I think the issues are more subtle and widespread, though. I left academia about two decades ago but have steadily hired a large number of PhD/MSc-level people from the “hard sciences” in that time. There’s a consistent trend I’ve seen in the interview process that often separates people educated in the US before university from the others. It’s stranger today, as everything is “data science”, “machine learning”, or “artificial intelligence”. I can’t imagine a CV in previous times simply stating “Kalman filter” or “support vector machine” or “particle filtering” so independently of any goal or outcome. I recently spoke to someone who had wrapped a postdoc in numerical GR in a lot of machine learning language. I asked why and was told, “If I don’t talk about machine learning I don’t get grant money.”

As a father of two children, I’ve seen large changes in education that roughly fall into two camps: first, the widespread adoption of “common core”, similar to California; and second, the influence of all this new-fangled training that upsets the progressive (graded) aspect of education and introduces topics in strange ways. This seems to hit math and reading most obviously. The former is the reason I put my children in private school. My 8th grader is in Algebra I, and I expect that he will have calculus and linear algebra by graduation. I am less concerned with him having calculus proper and more interested in mathematical reasoning beyond the rote memorization of rules used to build fluency in the earlier years of primary and early secondary school. I can tell that what they are trying to do, pedagogically, is introduce more abstract thinking in earlier years, but it seems very inconsistent and contrived (as opposed to abstract). It is a hodgepodge of ideas that seems to be a dangerous experiment with something that was already presenting a challenge (in the US). More broadly than California, I have seen efforts to level the playing field not by creating more opportunity but by removing material deemed challenging. Often this is accompanied by some kind of DEI statement, which limits the debate. I’m open to change and to evolving the curricula, but it all seems a bit too politicized and arbitrary. I’ve also witnessed new-fangled material displace existing material with little debate (more broadly than maths, e.g. reading lists). And if jobs are the litmus test, the reality remains that I create many jobs for PhD-level quantitative researchers and I am not hiring many people educated at US primary and secondary schools.

The comments in this blog aren’t the right place for a discussion on this topic so I will stop here, but certainly wanted to voice my support and solidarity with colleagues and others mentioned above.

BTW, I recall doing some work on applying knot theory to protein folding many years back. The difficulty was in the computing power. Several years later I started building proprietary systems that were more and more powerful. There’s a ton of marketing behind Google (just as there was with IBM). Not to diminish their work, but when the details of AlphaGo emerged, it was remarkably similar, if not identical, to a proprietary system I have been running on a massively parallel GPU cluster for years. My point is that while there’s been progress, largely due to speed and the availability of data, very little has changed otherwise. Sometimes we joke that it’s still all OLS despite the hype. It’s fun to write down Ax=b on a quantum computer, but after a while it becomes clear that it’s a perfectly good classical problem, whether or not we have error correction and large numbers of qubits.

Jim Akerlund,

Fixed.

All,

Sorry, but I can’t moderate a general discussion of everyone’s ideas about how math should be taught. If it’s not very specifically about the Open Letter, it’s off-topic.

Rando M.,

I did mention this on the blog previously, see the end of this posting:

https://www.math.columbia.edu/~woit/wordpress/?p=12381

The Kontsevich and Segal paper: https://arxiv.org/abs/2105.10161

-drl

Dear Peter

The Scholze result verification has nothing to do with ML, the basic “technology” in proof assistants is type theory which is strongly related to mathematical logic.

These systems have had a series of magnificent achievements in recent years. ML has obviously also been successful but, as pointed out, this seems to be the first time that it has yielded plausible and serious math conjectures. In short, both developments are serious. The first is not “depressing”; as for the second, I sympathize with your feelings, but that’s life.

Eitan Bachmat,

I understand that the proof assistant business is different from the ML business, but I fear that in both cases there’s not much I can do about my reaction to seeing the best human minds unable to compete with the machine.

On both fronts there’s probably a lot of hype, and the best human minds are still in many ways far ahead of the machine. But rather than looking into this in detail, it seems like a good idea to just avoid thinking about it…

There’s actually a source for the ICM 2026 news: the latest IMU Circular Letter to Adhering Organizations. See here: https://www.mathunion.org/fileadmin/IMU/Publications/CircularLetters/2021/IMU%20AO%20CL%2025_2021.pdf

Peter, I wouldn’t think of it as “human minds being unable to compete”. Unlike Chess or Go, the goal of mathematics is inherently open-ended, being exploration and discovery based on aesthetic taste (even if sometimes motivated by practical concerns). Computers will surely play an increasing role in assisting humans, both by reducing tedium and aiding exploration, but there are sufficient depths unreached for this to mean richer mathematics rather than a trivialisation of the field.

I think there is a plausible theory that one of the two machine-learning-in-mathematics success stories you mention was not really a machine learning breakthrough, if you take the point of view that to count as a success of machine learning it must not be something that could have been achieved with a similar amount of effort using more classical statistical tools like linear regression.

Specifically, the knot theory one – I’m not a statistician, but Figure 3b in the Nature paper looks like the kind of linear correlation between two variables of interest that could be found by doing a simple linear regression of all the algebraic invariants on all the geometric invariants, and then the second relevant variable could be identified by further analysis, like adding quadratic terms.

Even if I were a statistician, there would be something silly about complaining that you could have achieved something a different way when, well, you didn’t, but given that advances in mathematics achieved by machine learning (justifiably) get significantly more attention than similar advances in mathematics achieved a different way would, I think some effort must be put into considering this possibility.

For the representation theory one, my guess (again, not a statistician) is that this is probably not true. The message-passing neural network is an elegant system for learning properties of graphs, and I don’t know of any classical statistical tools that seem likely to be equally helpful.

I don’t think the proof assistants used in the Liquid Tensor Experiment involved any machine learning, although machine learning tools in that setting are being worked on and I imagine they will be useful tools for computer-verified mathematics researchers soon.

But overall I don’t think the position of hoping that machine learning won’t help humans do mathematics is really tenable. Is mathematics really that much harder than chess? Of course quite a lot of what has already been written is hype, including some works by authors that seem to understand neither the field of mathematics they are working in nor the basic principles of statistics. But saying that the field is inherently hype because of that is like saying Florida doesn’t exist because there were a lot of Florida real estate scams.

Will,

I should make clear that I’m not saying these stories are hype, quite the opposite: what’s bothering me is that they appear not to be hype. Maybe the future of mathematics is in close collaboration between human minds and computers, but I’m personally not on board with that, just because to my mind we’re all already too close to computers.

@Peter Woit – the story with proof assistants is very far from ‘the human cannot compete with the machine’. We are seriously far from being able to use proof assistants to help with the creation of pretty much any proof.

However, if you have created a long and complicated proof, where you perhaps have a great many cases which you really hope are exhaustive, or where the definitions are subtle and being used in ways which are close to the limits, you might reasonably be worried that you are missing something.

You could deal with that worry by ignoring it (as is traditional), but this occasionally leads to errors, including sometimes plainly false theorems. Or you could deal with it by blowing up your 200-page human-readable paper to 2000 pages which a human can in principle read but in practice won’t, by filling in every single detail and being very careful to do every little calculation explicitly. Or, now, you can spend a still very large amount of time (much longer than writing 200 pages, probably a bit less than writing 2000) putting all the details into a computer and asking it to formally verify the proof.

The major gains at the moment are that you can be fairly confident the computer’s answer is accurate, and that you can afford to write inelegant stuff and skip the prose for the proof assistant, because no human will read it and judge you. And (a minor point) for a class of basic calculations the proof assistant can do the work for you. The major loss (which hopefully will get better over time) is that you have to teach the computer a lot of material that would be assumed knowledge for a journal paper, but which no one had previously got around to adding to the proof assistant’s knowledge base.
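To make the workflow above a little more concrete, here is a toy illustration in Lean 4. It is nothing like the scale of the Liquid Tensor Experiment, the theorem name `my_add_comm` is hypothetical, and the sketch assumes a recent Lean 4 toolchain in which the `omega` tactic ships with the core library. The first statement is checked by citing a lemma (`Nat.add_comm`) that someone already taught the system; the last two are the kind of routine calculation a proof assistant can discharge on its own.

```lean
-- A toy theorem, proved by citing a lemma already in Lean 4's core library.
-- (`my_add_comm` is a hypothetical name chosen for this illustration.)
theorem my_add_comm (a b : Nat) : a + b = b + a := Nat.add_comm a b

-- Routine calculations the proof assistant can do for you:
-- `decide` evaluates a closed, decidable statement.
example : 2 ^ 10 = 1024 := by decide

-- `omega` proves goals in linear arithmetic over Nat and Int.
example (n : Nat) (h : n < 3) : n + 1 ≤ 3 := by omega
```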

I’m sure the ML community thinks that one day they will bolt ML on to proof assistants to replace mathematicians, but this day is definitely not here yet. One major problem, compared to games, is that when you play a game you have two sides, and the side that plays the better strategy wins – you can start with a pathetic strategy, try to learn better responses, and repeat millions of times to get somewhere, with these millions of repeats being the computer playing itself with different variations of its current strategy and seeing which things work better.

In mathematics (and most things, actually) you don’t really have this. You can ask the computer to prove something; if its strategy is pathetic, it will simply fail and there is no good way to say ‘now improve’ in any directed way, it doesn’t get a score for its failure (at least not without a human intervening) to say which variation of strategy was best. Similarly if it succeeds it’s not clear how to give the computer a ‘next problem’ that’s at the right level to get improvement, again at least not without human intervention; and humans simply don’t have time to intervene all the time at the rate current ML programs can learn.

Perhaps as a mathematician who has been involved in computer-assisted mathematics for more than 40 years I might be allowed to comment? At that time, computers could not be said to provide artificial intelligence. What they provided was artificial stupidity. They were therefore very useful in augmenting our natural supply of stupidity, and making far more mistakes far more quickly than we could do ourselves. This was, and still is, a huge benefit to mathematicians, and speeds up progress in all sorts of ways.

I may be wrong, but my impression is that a lot of what is called artificial intelligence these days is really just a more sophisticated version of artificial stupidity. And making connections that people haven’t seen before is exactly what artificial stupidity should be expected to do: if people make these connections, they reject them as stupid, and they are lost. It is only because they are made by “artificial intelligence” that people take them seriously, and think about them.

Thanks for the mention.

Regarding your question about the “relevance” of quantum information. It’s the easiest way people in the foundations can get funding through the many current quantum initiatives. We’ll therefore almost certainly see more of this, also quantum simulations (you know, wormholes and LQG and that kind of thing).

Peter,

You may very well consider this off-topic, and if so, I am sure you will let me know.

It is interesting that quite a few people from Stanford have signed the Open Letter on K-12 Mathematics, since the main person behind the new California Mathematics Framework, Prof. Jo Boaler, is at the Stanford Graduate School of Education. Boaler had a serious run-in with mathematicians in the early 2000s. Wayne Bishop, R. James Milgram, and Paul Clopton criticized her work and accused her of scientific misconduct, a charge that Stanford dismissed. Anyone interested in the details of the criticisms can find them here: https://www.nonpartisaneducation.org/Review/Essays/v8n5.htm and https://nonpartisaneducation.org/Review/Essays/v8n4.htm . According to Bishop et al., one of the main effects of Boaler’s curriculum changes at a high school that was part of a study she ran was to significantly increase the number of students from that school who needed to take remedial mathematics courses when they got to college, just the kind of thing the people behind the open letter are worried about.

Mark Hillery,

Not off-topic at all. For more about Boaler, someone pointed me to this

https://fillingthepail.substack.com/p/tessellated-with-good-intentions

On the subject of math news (very sad news, as it happens): Jacques Tits, a distinguished mathematician who won both the Wolf and Abel Prizes, died on Dec. 5, 2021, at the age of 91.

Though I did not know him personally (nor can I claim any detailed knowledge of his work), I do not hesitate to say that, as both man and mathematician, he was very highly regarded.

In particular, in regard to matters mathematical, he will be remembered for his work on buildings (curiously enough, “building” is an actual technical term, as is, I believe, “apartment”!).

I believe he may also have been the first to claim a sighting of what is generally regarded as the unicorn (some might say “Loch Ness Monster” or “Bigfoot” would be more apposite monikers!) of abstract algebra: the legendary *field with one element* (an idea that, to the best of my knowledge, is still viewed by most mathematicians as less than fully fleshed out, and is arguably as close as math gets to the sound of one hand clapping).

I believe he also helped guide the mathematical development of a young Pierre Deligne.

He will be missed.

A short and moving obituary by Broué at the Société Mathématique de France:

https://smf.emath.fr/smf-dossiers-et-ressources/broue-memoriam-tits

I would like, if I may, to comment on the achievements of Jacques Tits in regard to the unification of mathematics. He did more than anybody else to unify the fields of finite, algebraic and Lie group theory, and the corresponding finite, algebraic and differential geometries. Tits’s geometries, buildings and all the rest of it seem to be regarded as somewhat irrelevant to mainstream differential geometry, but this is far from true. He saw, more clearly than anyone, the fundamental structures that are central to Klein’s vision of a unified algebra/geometry. It is a pity that others have chosen to use their power and influence to drive a wedge between algebra and geometry, which Tits sought to unite. I met him a few times in my career, and he was unfailingly encouraging and appreciative. I was astonished to find that not only did he know of my work, but he knew it well, and appreciated it for what it was.

This may be a bit off topic, but there is a really nice obituary of another of the giants of mathematics, John Conway, at

https://royalsocietypublishing.org/doi/10.1098/rsbm.2021.0034

In terms of physics, there is mention of the relationship of the Conway-Norton Monstrous Moonshine to string theory via the work of Richard Borcherds, and also of the Free Will Theorem proved with Simon Kochen. The former, I suspect, has little to say about real physics, but the latter is a close relative of Bell’s inequality and, I suspect, is fundamental, although clearly it has nothing whatever to do with free will.

Also semi-off-topic (but the Bogdanov affair was once extensively discussed here): both Bogdanov twins have now died of Covid.