First something really important: chalk. If you care about chalk, you should watch this video and read this story.

Next, something slightly less important: money. The Simons Foundation in recent years has been having a huge (positive, if you ask me…) effect on research in mathematics and physics. Their 2018 financial report is available here. Note that not only are they spending \$300 million/year or so funding research, but at the same time they’re making even more (\$400 million or so) on their investments (presumably RenTech funds). So, they’re running a huge profit (OK, they’re a non-profit…), as well as taking in each year \$220 million in new contributions.

Various particle physics-related news:

- The people promoting the FCC-ee proposal have put out FCC-ee: Your Questions Answered, which I think does a good job of making the physics case for this as the most promising energy-frontier path forward. I don’t want to start up again the same general discussion that went on here and elsewhere, but I do wonder about one specific aspect of this proposal (money) and would be interested to hear from anyone well informed about it.

The FCC-ee FAQ document lists the cost (in Swiss francs or dollars, worth exactly the same today) as 11.6 billion (7.6 billion for tunnel/infrastructure, 4 billion for machine/injectors). The timeline has construction starting a couple years after the HL-LHC start (2026) and going on in parallel with HL-LHC operation over a decade or so. This means that CERN will have to come up with nearly 1.2 billion/year for FCC-ee construction, roughly the size of the current CERN budget. I have no idea what fraction of the current budget could be redirected to new collider construction, while still running the lab (and the HL-LHC). It is hard to see how this can work without a source of new money, and I have no idea what the prospects are for getting a large budget increase from the member states. Non-member states might be willing to contribute, but at least in the case of the US, any budget commitments for future spending are probably not worth the paper they might be printed on.

Then again, Jim Simons has a net worth of 21.5 billion, and maybe he’ll just buy the thing for us…

- Stacy McGaugh has an interesting blog post about the sociology of physics and astronomy. His description of his experience with physicists at Princeton sounds all too accurate (if he’d been there a couple years earlier, I would have been one of the arrogant, hard-to-take young particle theorists he had to put up with).

McGaugh’s specialty is dark matter and he has some comments about that. If you want some more discouragement about prospects for detecting dark matter, today you have your choice of Sabine Hossenfelder, Matt Buckley, or Will Kinney. I don’t want to start a discussion of everyone’s favorite ideas about dark matter, but wouldn’t mind hearing from an expert whether my suspicion is well-founded that some relatively simple right-handed neutrino model might both solve the problem and be essentially impossible to test.

- Lattice 2019 is going on this week. Slides here, streaming video here.

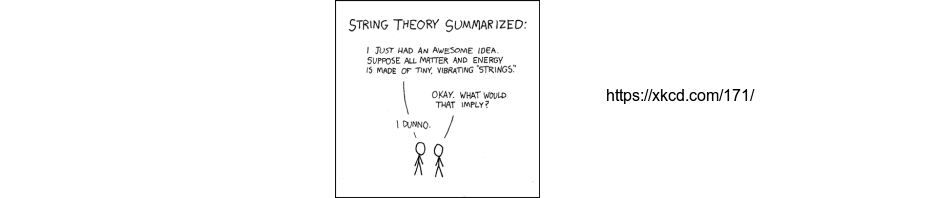

- Strings 2019 talk titles are starting to appear here. I’ll be very curious to hear what Arkani-Hamed has to say. His talk title is “Prospects for contact of string theory with experiments (vision talk)” and while he’s known for giving very long talks, I don’t see at all how this one could not be extremely short.

On a more personal front, yesterday I did a recording for a podcast from my office, with the exciting feature of an unannounced fire drill happening towards the end. Presumably this will get edited out, and I’ll post something here when the result is available.

Next week I’ll be heading out for a two-week trip to Chile, with one goal being to see the total solar eclipse there on July 2. Will start out up in the Atacama desert.

Update: John Horgan has an interview with Peter Shor. I very much agree with Shor’s take on the problems of HEP theory:

High-energy physicists are now trying to produce new physics without either experiment or proof to guide them, and I don’t believe that they have adequate tools in their toolbox to let them navigate this territory.

My impression, although I may be wrong about this, is that in the past, one way that physicists made advances is by coming up with all kinds of totally crazy ideas, and keeping only the ones that agreed with experiment. Now, in high energy physics, they’re still coming up with all kinds of totally crazy ideas, but they can no longer compare them with experiments, so which of their ideas get accepted depends on some complicated sociological process, which results in theories of physics that may not bear any resemblance to the real world. This complicated sociological process certainly takes beauty into account, but I don’t think that’s what is fundamentally leading physicists astray. I think a more important problem is this sociological process leads high-energy physicists to collectively accept ideas prematurely, when there is still very little evidence in favor of them. Then the peer review process leads the funding agencies to mainly fund people who believe in these ideas when there is no guarantee that it is correct, and any alternatives to these ideas are for the most part neglected.

Update: I think John Preskill and Urs Schreiber miss the point in their response here to Peter Shor. Shor is not calling for an end to research on quantum gravity or saying it can’t be done without experimental input. The problem he’s pointing to is a “sociological process” and so potentially fixable. This problem, “collectively accept[ing] ideas prematurely”, not realizing the difference between a solid foundation you can build on and a speculative framework that may be seriously flawed, is one that those exposed to the sociological culture of the math community are much more aware of. Absent experimental checks, mathematicians understand the need to pay close attention to what is solid (there’s a “proof”), and what isn’t.

In France too we have better quality chalk than in the US.

Thanks for the link to Stacy McGaugh’s blog post, I found it interesting. I’ll also be traveling to Chile to see the eclipse; I’ll be near the La Serena area (hopefully the weather will cooperate and we’ll see few or no clouds!)

Supernaut,

I’ll be staying in La Serena, no plan yet for where we’ll try and see the eclipse, will depend on cloud situation that day.

The timeline has detector construction starting in 2031, with HL-LHC shutdown likely around 2035, so there isn’t that big an overlap. I believe (but can’t find a reference now) that the timelines also all have the expensive tunnel excavation starting after HL-LHC shutdown.

wrt funding, unlike the US National Labs, CERN can borrow money to spread out spending peaks, and its funding is secure enough that it can get a good interest rate. Both LEP and LHC construction were partially funded that way. Any of the FCC proposals will still be a stretch, so the timeline also includes 3 years to work out the funding strategy and another 3 to get in-principle agreements.

Hi Peter,

La Serena is really awesome, but cozy San Pedro de Atacama and its surroundings should be the best place in Chile to see such a thing. Of course it depends on the cloud situation, but if I remember correctly the Atacama desert is the driest place on Earth. Also, don’t miss a trip to Valparaíso if you get a chance.

Dan Riley,

Thanks, I wasn’t aware there was an ability to borrow against future funding, that would help.

The documents I was looking at said HL-LHC 2026-2036/7 and for the FCC-ee, see figure 19 of

http://cds.cern.ch/record/2653669/files/CERN-ACC-2019-0003.pdf?version=2

This has construction starting 2029 (tunnel) and 2031 (machine + detectors), physics operation starting 2039.

If there is some way to fund this, it seems likely it would involve stretching out this schedule.
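For anyone who wants to play with the numbers, here is a rough sketch of the arithmetic behind the "nearly 1.2 billion/year" figure in the post. The cost figures are the ones quoted from the FCC-ee FAQ. The assumption that spending is spread evenly over the construction period is mine, as are the 12- and 15-year spans, which are purely hypothetical stretched schedules and not anything CERN has proposed.

```python
# Back-of-the-envelope sketch; all amounts in billions of CHF (or USD, at parity).
TUNNEL_INFRA = 7.6        # tunnel/infrastructure, as quoted from the FCC-ee FAQ
MACHINE_INJECTORS = 4.0   # machine/injectors, as quoted from the FCC-ee FAQ


def annual_cost(total, years):
    """Average spending per year, ASSUMING the total is spread evenly."""
    return total / years


total = TUNNEL_INFRA + MACHINE_INJECTORS

# 10 years is the "decade or so" from the post (roughly 2029 to 2039).
# 12 and 15 years are hypothetical stretched schedules, for comparison only.
for years in (10, 12, 15):
    print(f"{years} years: {annual_cost(total, years):.2f} billion/year")
```

Real construction spending would presumably be uneven, and borrowing of the kind Dan Riley describes would flatten the peaks, so this should only be read as a rough guide to how much a longer schedule lowers the average yearly bill.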

JE,

Problem is, the track of the total eclipse is fairly narrow, thus the need to be in the area around La Serena on July 2. Your other suggestions are encouraging, since our plan is to spend a few days in San Pedro de Atacama before the eclipse (any observatories nearby looking for a visitor?) and a day or two in Valparaiso afterwards.

Many readers of this blog already know about my work on the history and sociology of chalk in mathematics. For those who don’t: it’s a topic of serious academic research, with lots of fascinating questions and findings!

Here’s the piece I wrote for the Best Writing in Mathematics series: http://mbarany.com/DustyDisciplineBWM15.pdf

Here’s the longer article it’s based on: http://mbarany.com/Chalk.pdf

And here’s a short interview I did on the subject: http://www.concordmonitor.com/x-6888872

About almost-impossible-to-detect right-handed neutrinos: my suspicion is that these models have been undeservedly neglected, to some degree, by theorists, just because there is no way that experiments would ever be able to detect them.

This is presumably the same phenomenon that leads theorists to predict particles that can be detected by the next generation of accelerators. Somebody should give it a name, and then we will be better able to correct for this bias.

The Japanese chalk quality is fantastic, the multiple moving blackboards a thing to marvel at. But woof! The handwriting of many, many mathematicians is so bad that they let us down most of the time. “You can’t make a silk purse out of a sow’s ear”.

Regarding right handed neutrinos: You need the dark matter to be around today, whereas heavy right handed neutrinos decay to Higgs + regular neutrino. The requirement that they be stable on cosmological time frames constrains the possibilities, which pushes you to lower masses (which undercuts the seesaw motivation, if you care).

You then have to figure out how to produce sterile neutrinos in the early universe. Not only do you have to get the right amount of them, but they can’t be relativistic because the extra contribution to N_eff screws up nucleosynthesis. It’s challenging to actually get them to be cold dark matter; most models end up with at least a sizeable proportion as “warm” dark matter, which is (currently) observationally OK, perhaps even favored if you believe some structure surveys. Getting this to happen, though, generally requires them to be produced resonantly, meaning that you have to arrange by hand for at least two to have nearly degenerate masses. I don’t think non-resonant production is entirely dead, but that case tends to be hotter. (The other option is to introduce some other particle that can decay to them, but then you’ve lost some of your simplicity.)

So, I’m not sure where in your opinion “relatively simple” ends, but IMHO all the simple options are relatively warm, and those are being studied via structure formation.

Summary of the state of the art is in this white paper:

https://arxiv.org/pdf/1602.04816.pdf

Peter:

I totally agree with your update. I’m not saying we should give up thinking about quantum gravity. I’m saying that theoretical physicists should think very carefully about what they “know” about black holes, information loss, AdS-CFT, and string theory, and see whether their evidence is as convincing as they think it is. I don’t think it is.

Some evidence that the sociological process is broken:

1. The conventional wisdom that Susskind’s theory of complementarity explained the information loss paradox, which had been accepted by many high-energy physicists for years, broke down when the AMPS paper showed it was incompatible with basic principles of quantum information theory.

2. David Poulin and John Preskill have done some research on how you might be able to modify quantum field theory to obtain a non-unitary theory, thus accommodating black hole information loss. See this presentation. It seems to me that nobody is paying any attention to this because they “know” that the universe is unitary because AdS-CFT.

3. I don’t see how the paper of Shenker and Stanford, “Black Holes and the Butterfly Effect” and the idea that the CFT is a quantum error-correcting code can possibly be compatible. I’ve talked with people who think these two papers are both correct, and who really should know what they’re talking about, but I haven’t gotten any answers that I’ve found satisfactory. Maybe I don’t understand AdS-CFT well enough (I barely understand it at all), but my impression is that they’re asking quantum error correcting codes to behave in ways that are impossible. I’d be happy to go into this in more detail by email.

Peter Shor,

Thanks for the examples of the problem you’re pointing to. For better or worse, I’ve spent a lot of time trying to understand how string theory unification is supposed to work, enough to clearly see why it doesn’t work, and to feel comfortable writing about that problem. In the case of the supposed relation of quantum information theory and quantum gravity, I’ve yet to even see how this is supposed to work. I can’t see what specific plausible proposals are behind the mantras “space-time is doomed”, “quantum gravity is emergent” and “it from qubit”, so wouldn’t even know where to begin to try and evaluate the claims being made for such ideas.

It would be great if someone could write up clear explanations of this research program, what its specific proposals and goals are, what has been achieved, what has been found to not work. You should start a blog!

Unfortunately, there are a lot of papers about the relationship of quantum information theory and quantum gravity that I don’t understand. I’m not entirely convinced they have any interesting content, but I don’t understand them well enough to say that they are all just Zen-koan-like slogans à la Urs Schreiber.

The big successes of this relationship so far, in my opinion, are (a) the AMPS paper showing that Susskind complementarity cannot be correct as it was originally envisioned and (b) the Almheiri-Dong-Harlow paper showing that the Ryu-Takayanagi formula doesn’t lead to fundamental contradictions in physics as long as you postulate that the CFT is a quantum error-correcting code.

More to the point of my answers to John Horgan, the AMPS paper claims that one of the following four assumptions is false (I’ve split their assumption ii into two pieces):

(i) Hawking radiation is in a pure state (i.e., unitarity is not violated),

(ii-a) the information carried by the radiation is emitted from the region near the horizon (i.e., locality is not violated),

(ii-b) low energy effective field theory is valid outside a region microscopically close to the horizon (i.e., physics behaves as we think it should under conditions where we’ve tested it extensively),

(iii) the infalling observer encounters nothing unusual at the horizon.

The AMPS paper opts for (iii) being the incorrect assumption, and arrives at firewalls.

Talking with people from the it-from-qubit crowd, many of them seem to believe that (ii-a) is the incorrect assumption. Maybe this makes research easier … if there’s non-locality, you can just assume that we know nothing about the laws of physics in the AdS bulk theory, and only work on the physics in the boundary CFT. And conformal field theory is much better understood than quantum gravity, although I suspect that you can only go so far without thinking about how the laws of physics might work for the AdS part.

Taking (ii-b) as the incorrect assumption presumably would contradict over a century of physics experiments—as far as I can tell, nobody advocates this.

And finally, taking (i) as the incorrect assumption seems to be anathema. I suspect that this is because AdS-CFT is taken to be axiomatic—they have 20 years of work invested in it—and the conventional wisdom says that AdS-CFT proves unitarity. Is the conventional wisdom correct about this? Beats me, but as far as I know, nobody is thinking about whether it might be wrong.

Is there really nobody thinking about whether [AdS-CFT proves unitarity] is wrong?

To say the least Gerard ‘t Hooft is suspicious about it:

https://arxiv.org/abs/1902.10469

Incidentally Utrecht University will host an international conference entitled “’t Hooft 2019 – From Weak Force to Black Hole Thermodynamics and Beyond” beginning Thursday July 11 until Saturday July 13 with the following advert:

https://thooft2019.sites.uu.nl/speakers/

Looking at https://cds.cern.ch/record/2668388 it is instructive to watch how difficult it is for physicists to agree on the basics of how to model the problem they want to deal with.

Actually I didn’t point to the video I was thinking about. https://cds.cern.ch/record/2668988 was the discussion session about black hole information at CERN in March 2019 that I had in mind.

All,

I’m shutting off comments here, partly because people seem to want to get into a not obviously well-informed discussion of the details of AdS/CFT, and partly because I’m leaving for Chile now, will be traveling the next couple weeks.